How to Reduce Amazon EC2 Costs - part 1

In this two-part series we’ll talk about a subject which we get asked about quite often: "How do I reduce my Amazon EC2 costs?"

Welcome back to part 2 of our series on "How do I reduce my Amazon EC2 costs?". Last time we covered the areas that usually deliver the quickest wins and the majority of your savings: discount strategy, Spot Instances, and rightsizing. How much these will save you obviously depends on your specific environment. Today we cover the other key methods for optimising your spend.

Let's talk now about designing your platform to reduce data transfer charges. This catches people out because AWS charges when data leaves its environment, and also when data moves between regions or between availability zones. Depending on how you have designed your data flows, you can end up moving data between regions or availability zones, or out of AWS altogether, far more often than necessary, and incurring significant data transfer charges. So set up your virtual private networks and the links between regions so that data only crosses a chargeable boundary when it really needs to. For certain applications and for some companies, this can be a lot of money.

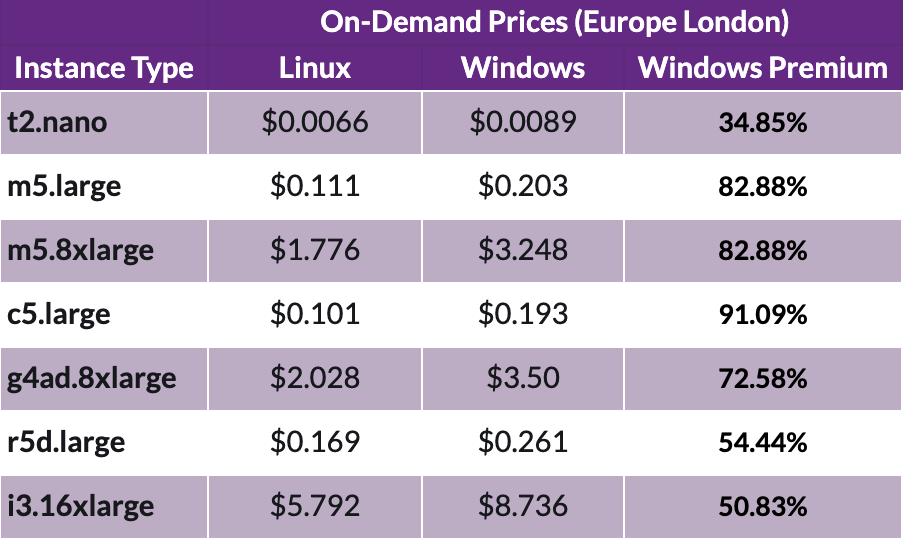

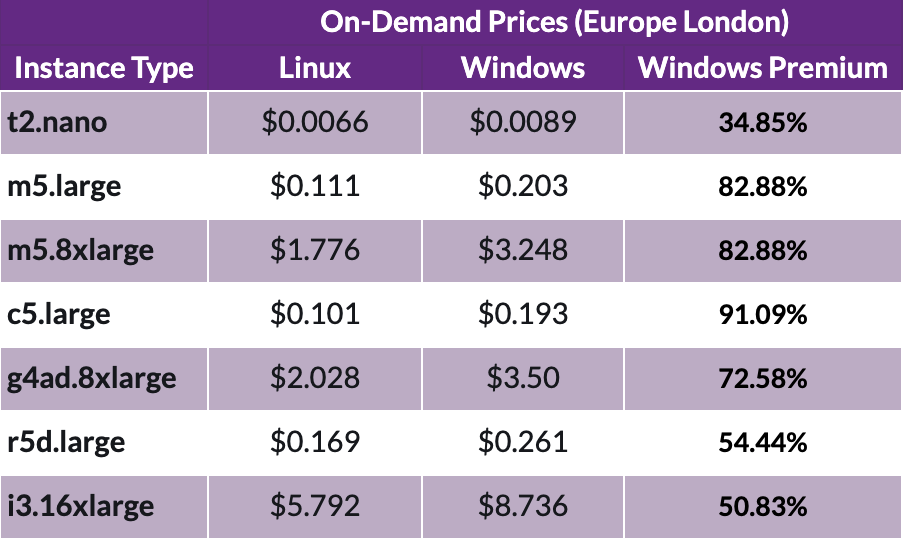
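
To make the data transfer point concrete, here is a minimal Python sketch that estimates a monthly transfer bill by traffic path. The per-GB rates, the path names, and the workload volumes are all assumptions chosen for illustration, not current AWS pricing, so substitute the figures for your own regions.

```python
# Illustrative per-GB rates in USD. These are placeholders, NOT current AWS
# pricing; check the AWS pricing pages for your regions before relying on them.
ASSUMED_RATE_PER_GB = {
    "same_az": 0.00,        # traffic that stays inside one availability zone
    "cross_az": 0.02,       # assumed combined rate for traffic between AZs
    "cross_region": 0.02,   # assumed inter-region rate
    "internet_out": 0.09,   # assumed rate for data leaving AWS
}


def monthly_transfer_cost(gb_by_path):
    """Return (total, per-path breakdown) for a month of traffic in GB."""
    breakdown = {
        path: gb * ASSUMED_RATE_PER_GB[path] for path, gb in gb_by_path.items()
    }
    return sum(breakdown.values()), breakdown


# Hypothetical workload: a chatty service split across two AZs.
before = {"cross_az": 50_000, "internet_out": 5_000}
# Same workload after keeping the chatty traffic inside one AZ.
after = {"same_az": 50_000, "internet_out": 5_000}

cost_before, _ = monthly_transfer_cost(before)
cost_after, _ = monthly_transfer_cost(after)
print(f"before: ${cost_before:,.2f}  after: ${cost_after:,.2f}")
```

Running the same calculation against your own traffic volumes is a quick way to see whether a network redesign is worth the effort.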

Next, we're going to look at the operating system: Windows versus Linux. If your application can run on Linux, it's going to be a lot cheaper than running it on Windows. Windows works really well in AWS, but because of Microsoft licensing, Windows instances typically cost 70-90% more than the equivalent Linux instance. You also get less flexibility in how Reserved Instances work.

There's another element to this: on top of Windows EC2 instances costing nearly double their Linux equivalents, the savings you get from Reserved Instances and Savings Plans are approximately half those you get on Linux. In a fully optimized environment, you could end up paying a lot more for Windows than for Linux. It's therefore a good idea to test and run your instances on Linux rather than Windows wherever possible. That includes running SQL Server on Linux instead of Windows, which can make a real difference at the lower editions of SQL Server. It makes less of a difference at the higher editions, because the cost of the license outweighs the savings on the underlying platform.
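
The compounding effect of a higher list price and a smaller discount is easy to underestimate, so here is a rough Python sketch of the arithmetic. The hourly price and the discount percentages are assumptions picked for illustration; only the 70-90% premium and the "approximately half" relationship come from the text above.

```python
def effective_hourly(on_demand, discount):
    """Hourly price after a commitment discount (0.0 to 1.0)."""
    return on_demand * (1 - discount)


# All figures below are assumptions for illustration only.
linux_on_demand = 0.10                      # hypothetical USD per hour
windows_premium = 0.80                      # within the 70-90% range above
windows_on_demand = linux_on_demand * (1 + windows_premium)

linux_discount = 0.40                       # assumed commitment discount
windows_discount = linux_discount / 2       # "approximately half" of Linux

linux_cost = effective_hourly(linux_on_demand, linux_discount)
windows_cost = effective_hourly(windows_on_demand, windows_discount)

print(f"on-demand ratio: {windows_on_demand / linux_on_demand:.2f}x")
print(f"discounted ratio: {windows_cost / linux_cost:.2f}x")
```

Under these assumptions the gap between Windows and Linux widens once discounts are applied, which is the point made above about fully optimized environments.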

Let's talk now about auto-scaling and scheduling. AWS has some great features for creating Auto Scaling groups and scheduling them, so that if your workloads have a predictable usage pattern you can scale up and down on a timetable. You can also scale up and down according to load on the systems, but this requires applications that can shut down gracefully and start up very quickly. This highlights the importance of cloud-native application re-engineering, which you may need to do.
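
As a sketch of what time-based scaling can look like, the Python below builds the arguments for two recurring scheduled actions on an Auto Scaling group and, separately, sends them with boto3's `put_scheduled_update_group_action`. The group name `dev-web`, the capacity, and the hours are hypothetical, and the helper functions are our own illustration rather than anything AWS provides.

```python
def office_hours_actions(group_name, daytime_size, start_cron, stop_cron):
    """Build keyword arguments for two recurring scheduled scaling actions."""
    return [
        {
            "AutoScalingGroupName": group_name,
            "ScheduledActionName": f"{group_name}-scale-up",
            "Recurrence": start_cron,
            "MinSize": daytime_size,
            "MaxSize": daytime_size,
            "DesiredCapacity": daytime_size,
        },
        {
            "AutoScalingGroupName": group_name,
            "ScheduledActionName": f"{group_name}-scale-down",
            "Recurrence": stop_cron,
            "MinSize": 0,
            "MaxSize": 0,
            "DesiredCapacity": 0,
        },
    ]


def apply_actions(actions):
    """Send the actions to AWS. Needs boto3 and valid credentials."""
    import boto3  # imported here so the planning step runs without it

    client = boto3.client("autoscaling")
    for action in actions:
        client.put_scheduled_update_group_action(**action)


# Hypothetical group name and hours; cron expressions are in UTC by default.
actions = office_hours_actions("dev-web", 4, "0 7 * * 1-5", "0 19 * * 1-5")
for action in actions:
    print(action["ScheduledActionName"], action["Recurrence"], action["DesiredCapacity"])
```

Keeping the planning step separate from the call to AWS means you can review the schedule before anything changes. Pinning the minimum and maximum to the same value is a simplification; a real group that also scales on load would leave room between them.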

Another practice that goes along with this is setting up your infrastructure as code. This means using tools such as AWS CloudFormation, Terraform and a number of others, which let you spin up entire environments very quickly from a pre-configured set of rules and conditions, saving a huge amount of time and effort. It also opens up new opportunities for cost reduction. For example, you can shut down an entire platform overnight and simply spin it up again the next day. This has security benefits as well as cost benefits. So building an infrastructure as code capability into your platform can be a real cost saver, as well as a time saver.
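
To put a rough number on the overnight shutdown idea, this small Python sketch works out what fraction of always-on compute hours a schedule avoids. The 12-hour, five-day schedule is an assumption, and the figure covers compute hours only; anything that persists while the environment is down, such as storage, would still be billed.

```python
HOURS_PER_WEEK = 7 * 24


def weekly_running_hours(hours_per_day, days_per_week):
    """Hours per week the environment is actually up."""
    return hours_per_day * days_per_week


def savings_fraction(hours_per_day, days_per_week):
    """Fraction of always-on compute hours avoided by tearing down out of hours."""
    running = weekly_running_hours(hours_per_day, days_per_week)
    return 1 - running / HOURS_PER_WEEK


# Hypothetical schedule: environment is up 12 hours a day, Monday to Friday.
fraction = savings_fraction(hours_per_day=12, days_per_week=5)
print(f"compute hours avoided: {fraction:.0%}")
```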

Data must form the foundation of your cost-reduction plan within AWS. As part of that, you should be setting tags so that you understand what your different workloads are being used for. Those tags might record which customer, product or account a workload is allocated to, or the purpose of the instance. They may also be simple yes/no flags, such as whether the instance is a candidate for rightsizing or whether the customer is paying for specific capacity. Using this tagging data you can quickly identify where your costs are, and where your best candidates for reductions are.
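
As an illustration of how tagging data turns into answers, here is a minimal Python sketch that totals cost per tag value. The records, field names and tag keys are hypothetical stand-ins for whatever your billing export contains.

```python
from collections import defaultdict

# Hypothetical cost records, as might come from a billing export.
# Field names and tag keys are assumptions for this example.
records = [
    {"resource": "i-aaa", "cost": 120.0, "tags": {"customer": "acme", "env": "prod"}},
    {"resource": "i-bbb", "cost": 80.0, "tags": {"customer": "acme", "env": "dev"}},
    {"resource": "i-ccc", "cost": 150.0, "tags": {"customer": "globex", "env": "prod"}},
    {"resource": "i-ddd", "cost": 45.0, "tags": {}},  # untagged
]


def cost_by_tag(records, tag_key):
    """Sum cost per value of one tag; untagged resources get their own bucket."""
    totals = defaultdict(float)
    for record in records:
        value = record["tags"].get(tag_key, "(untagged)")
        totals[value] += record["cost"]
    # Largest spend first, so the best candidates for review come out on top.
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


print(cost_by_tag(records, "customer"))
print(cost_by_tag(records, "env"))
```

Reporting the untagged bucket explicitly is a deliberate choice here: spend you cannot attribute is usually the first thing worth chasing.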

Having an effective data platform is critical in helping you to know where your costs are going, and how your spend on each service changes from month to month. You may see the cost of one service suddenly spike over a period of days or weeks because of something that hasn't been spotted, or something that has been implemented incorrectly. This is why a data platform is crucial if you want to cost-optimize.
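
One simple check such a data platform can run is to compare each service's latest daily cost with its recent average. The Python below is a sketch of that idea only; the seven-day window, the 1.5x threshold, and the sample figures are assumptions to tune for your own data.

```python
from statistics import mean


def find_spikes(daily_costs, window=7, threshold=1.5):
    """Return services whose latest daily cost exceeds threshold x trailing average."""
    spikes = {}
    for service, costs in daily_costs.items():
        if len(costs) <= window:
            continue  # not enough history to form a baseline
        baseline = mean(costs[-window - 1:-1])  # the `window` days before the latest
        latest = costs[-1]
        if baseline > 0 and latest > threshold * baseline:
            spikes[service] = (baseline, latest)
    return spikes


# Hypothetical daily costs in USD, oldest first.
daily_costs = {
    "ec2": [100, 100, 100, 100, 100, 100, 100, 103],
    "data_transfer": [20, 20, 20, 20, 20, 20, 20, 55],
    "new_service": [5, 6],  # too little history, so it is skipped
}

for service, (baseline, latest) in find_spikes(daily_costs).items():
    print(f"{service}: latest {latest} vs trailing average {baseline}")
```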

Governance is an important part of your cost planning and cost optimization strategy. It’s too big a topic to go into in detail here, but fundamentally it’s important to make sure that people can't spin up instances unnecessarily and then forget to stop them. You need very strict rules about shutting down instances on development platforms. Often developers or operations people don't understand how much the platform is costing, so education should also be part of that governance process.

Finally, let's talk about running workloads in containers, for example with Kubernetes or Docker. You may well be running those containers on EC2, which is why they are relevant to this article. If an application is not written to be cloud-native or properly cloud-aware, you can often simulate or obtain cloud-native features by running those application servers in containers.

Containerization is a clever and effective way of bringing costs down. It lets you make the most of your CPU and memory by using every last cycle available to you, rather than carrying a large amount of unused headroom because a workload isn’t quite small enough to fit a certain instance type, which can mean doubling the size of an instance just to squeeze out the extra few percent of performance.

Containers get around that problem and lots of others like it. They can significantly reduce the time it takes to deploy, and increase the density with which you can pack your workloads into a given EC2 environment. Within AWS you can move from EC2 to Amazon EKS, its managed Kubernetes service.
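
The density argument can be illustrated with a classic bin-packing heuristic. The Python sketch below uses first-fit decreasing to place workloads onto instances by memory alone. This is a deliberately simple stand-in, not how Kubernetes or any AWS service actually schedules containers, and the workload sizes and the 16 GiB instance are assumptions.

```python
def first_fit_decreasing(demands, capacity):
    """Pack demands into bins of the given capacity; returns a list of bins."""
    bins = []
    for demand in sorted(demands, reverse=True):
        if demand > capacity:
            raise ValueError(f"{demand} does not fit on one instance")
        for current in bins:
            if sum(current) + demand <= capacity:
                current.append(demand)
                break
        else:
            # No existing instance has room, so start a new one.
            bins.append([demand])
    return bins


# Hypothetical memory needs in GiB for nine application servers.
workloads_gib = [9, 5, 3, 10, 6, 2, 4, 7, 2]
instance_gib = 16  # assumed usable memory per instance

one_per_instance = len(workloads_gib)
packed = first_fit_decreasing(workloads_gib, instance_gib)

print(f"one workload per instance: {one_per_instance} instances")
print(f"packed as containers:      {len(packed)} instances")
for index, contents in enumerate(packed, start=1):
    print(f"  instance {index}: {contents} = {sum(contents)} GiB")
```

A real scheduler also has to account for CPU, headroom, and failure domains, so treat the instance count here as an upper bound on the saving rather than a forecast.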

Implementing these practices will enable you to ensure that your EC2 costs are as lean as possible and that your implementation has increased resilience to react to changing demand and usage.

If you would like to learn what specific steps to take to lower your own organisation’s EC2 spend, and that of other AWS resources, then book a free call with us here.


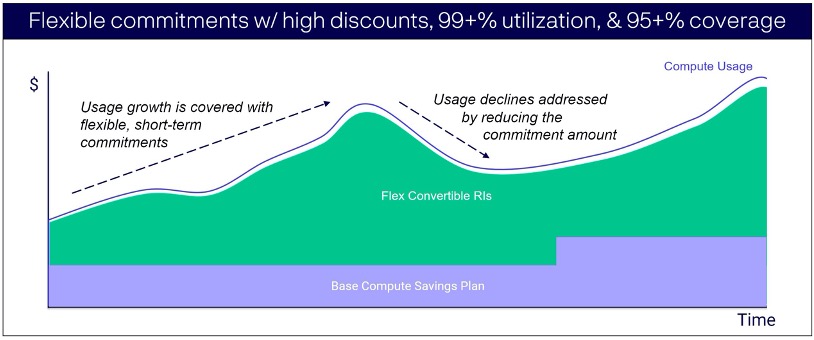

Savings Plans vs Reserved Instances Back in October 2019, AWS introduced Savings Plans, and a belief that Reserved Instances are dead began.

In August you were probably taking some much needed time off, possibly discovering new local delights, or forgoing flights for a staycation. As such...